Hello, I am Kakeya, the representative of Scuti.

Our company specializes in Vietnamese offshore development and lab-based development with a focus on generative AI, as well as providing generative AI consulting services. Recently, we have been fortunate to receive numerous requests for system development in collaboration with generative AI.

Meta’s release of its latest LLM, “Llama 3,” as open source has become a hot topic. I tried Llama 3 right away through Groq, a service that runs LLM inference at very high speed, and this article walks through everything up to confirming that it actually works. Llama 3 was impressive, but what astonished me was Groq’s overwhelmingly fast response…!

Table of Contents

- About Groq

- About Llama 3

- Trying out Llama 3 with Groq

- Testing the browser version of Chatbot UI

- Testing the local version of Chatbot UI

About Groq

What is Groq?

Groq is a company that develops custom hardware, the Language Processing Unit (LPU), designed specifically to accelerate inference for large language models (LLMs). Its technology is characterized by significantly higher processing speed than conventional hardware.

Groq’s LPUs achieve inference performance up to 18 times faster than other cloud-based service providers. Groq aims to maximize the performance of real-time AI applications using this technology.

Additionally, Groq has recently established a new division called “Groq Systems,” which assists in the deployment of chips to data centers and the construction of new data centers. Furthermore, Groq has acquired Definitive Intelligence, a provider of AI solutions for businesses, to further enhance its technological prowess and market impact.

What is Groq’s LPU?

Groq’s Language Processing Unit (LPU) is a dedicated processor designed to improve the inference performance and accuracy of AI language models. Unlike traditional GPUs, the LPU is specialized for sequential computation tasks, eliminating bottlenecks in computation and memory bandwidth required by large language models. For instance, the LPU reduces per-word computation time compared to GPUs and does not suffer from external memory bandwidth bottlenecks, thus offering significantly better performance than graphics processors.

Technically, the LPU contains thousands of simple processing elements (PEs), arranged in a Single Instruction, Multiple Data (SIMD) array. This allows the same instructions to be executed simultaneously for each data point. Additionally, a central control unit (CU) issues instructions and manages the flow of data between the memory hierarchy and PEs, maintaining consistent synchronous communication.

The primary effect of using an LPU is faster AI and machine learning inference. For example, Groq has run the Llama-2 70B model at 300 tokens per second per user on its LPU system, a notable improvement over its earlier figures of 100 and 240 tokens per second. The LPU can therefore handle AI inference in real time with low latency, in an energy-efficient package. This could enable major changes in areas such as high-performance computing (HPC) and edge computing.
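To get a feel for what these throughput figures mean for a user, here is a minimal sketch that converts tokens per second into waiting time. The 500-token response length is an assumption chosen only for illustration, and a steady generation rate is assumed.

```python
# Rough time to stream one response at different per-user throughputs.
# The 500-token response length is an illustrative assumption.

def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to emit num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # assumed length of one answer

for rate in (100, 240, 300):  # tokens per second, the figures quoted above
    print(f"{rate:>3} tok/s -> {seconds_to_generate(RESPONSE_TOKENS, rate):.2f} s")
```

Under these assumptions, going from 100 to 300 tokens per second cuts the wait for the same answer to one third.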

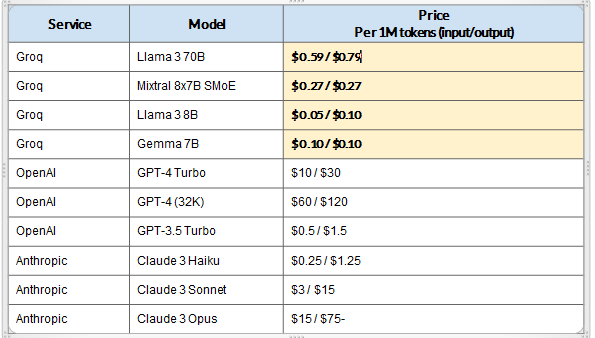

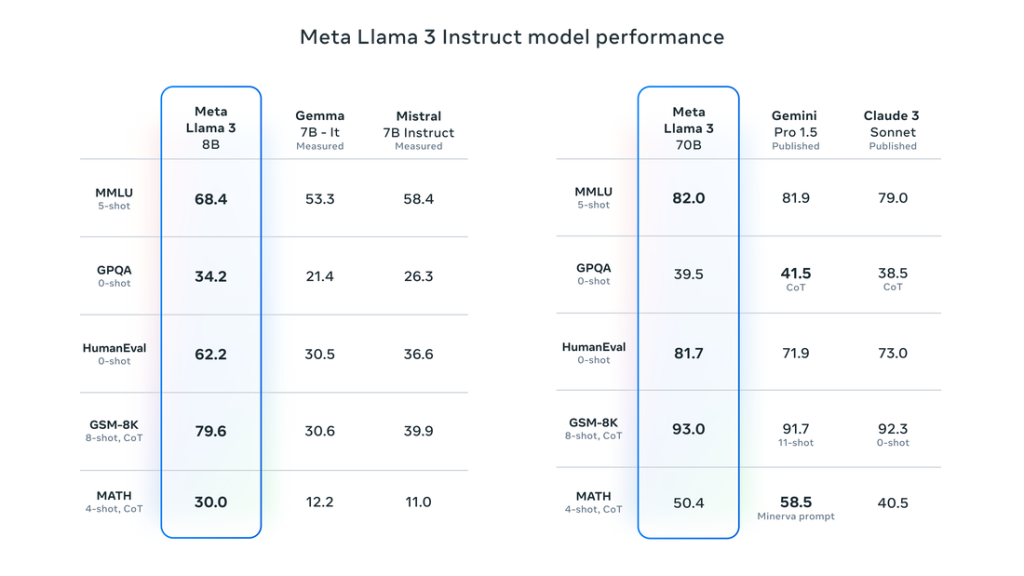

Groq’s Overwhelmingly Affordable API

Groq’s hardware resources can be accessed through an API provided by Groq. One of the notable features of Groq’s service is the incredibly low cost of using this API.

In other words, Groq is fast and affordable

The figure below, provided by ArtificialAnalysis.ai, compares API providers, mapping price on the horizontal axis and throughput (tokens per second) on the vertical axis. From this chart, it is visually evident that Groq is overwhelmingly fast and affordable, especially standing out in terms of throughput compared to other API providers.

About Llama 3

Overview and Features of Llama 3

Llama 3 is the latest large language model (LLM) developed by Meta. The model is trained on an extensive text dataset, enabling it to understand and respond to a wide range of language. Llama 3 is suited to a wide array of tasks such as writing creative content, translating between languages, and answering questions. The model is available on platforms like AWS, Databricks, and Google Cloud, serving as a foundation for developers and researchers to further advance AI. By offering Llama 3 as open source, Meta aims to enhance the transparency of the technology and foster collaboration with a broad range of developers.

Here are some features of Llama 3 as disclosed by Meta:

- Advanced Parameter Model: Llama 3 is a model with a very large number of parameters, available in two versions: 8B (eight billion) and 70B (seventy billion). This scale allows it to perform strongly in language understanding and generation.

- Industry-Standard Benchmark Evaluations: Llama 3 is evaluated using several industry-standard benchmarks such as ARC, DROP, and MMLU, achieving state-of-the-art results in these tests. Specifically, it shows significant improvements in recognition and reasoning accuracy compared to previous models.

- Multilingual Support: Llama 3 is trained on a dataset of over 15 trillion tokens. The dataset includes high-quality non-English data covering more than 30 languages, so the model can serve users beyond English speakers.

- Tokenizer Innovation: The newly developed tokenizer has a vocabulary of 128,000 tokens, enabling more efficient encoding of language. This improves the processing speed and accuracy of the model, allowing it to handle more complex texts appropriately.

- Improved Inference Efficiency: The adoption of Grouped Query Attention (GQA) technology has enhanced the model’s inference efficiency, enabling high-speed processing of large datasets and facilitating real-time responses.

- Enhanced Security and Reliability: New security tools like Llama Guard 2, Code Shield, and CyberSec Eval 2 have been introduced to enhance the safety of content generated by the model, minimizing the risk of producing inappropriate content.

- Open Source Availability: Llama 3 is offered entirely as open source, allowing researchers and developers worldwide to freely access, improve, and use it for the development of applications. This openness promotes further innovation.
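One reason Grouped Query Attention helps inference is that several query heads share one key/value head, which shrinks the key/value cache kept in memory during generation. The sketch below estimates that cache size. The layer and head counts are assumptions based on the published Llama 3 8B configuration (32 layers, 32 query heads, 8 key/value heads, head dimension 128), with 16-bit values and an 8,192-token context; treat them as illustrative.

```python
# KV-cache size with and without Grouped Query Attention (GQA).
# The layer/head counts are assumptions taken from the published
# Llama 3 8B configuration; treat them as illustrative.

def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for one sequence."""
    # Factor 2 = one tensor for keys plus one for values.
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value


LAYERS, QUERY_HEADS, KV_HEADS, HEAD_DIM = 32, 32, 8, 128
SEQ_LEN = 8192  # context length in tokens

mha = kv_cache_bytes(LAYERS, QUERY_HEADS, HEAD_DIM, SEQ_LEN)  # one KV head per query head
gqa = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, SEQ_LEN)     # 4 query heads share one KV head

print(f"MHA: {mha / 2**30:.1f} GiB, GQA: {gqa / 2**30:.1f} GiB, ratio {mha // gqa}x")
# prints "MHA: 4.0 GiB, GQA: 1.0 GiB, ratio 4x"
```

With these numbers the cache per sequence drops from 4 GiB to 1 GiB, which means less memory traffic per generated token.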

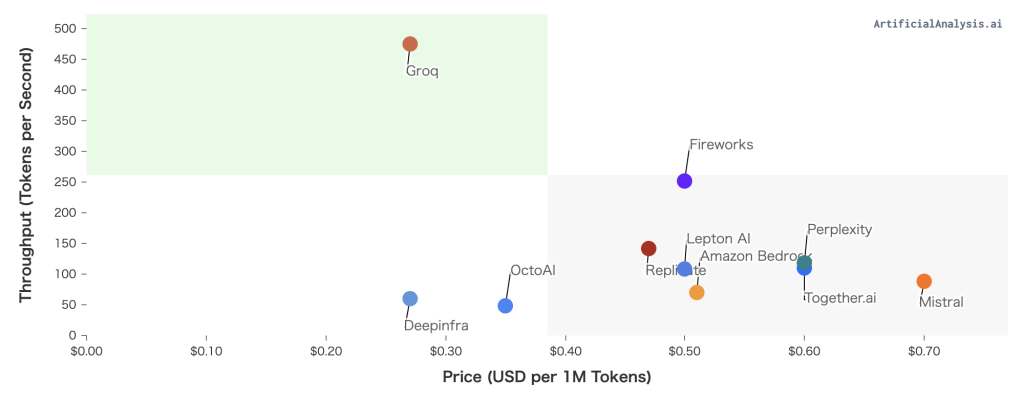

Performance Evaluation of Llama 3 According to Industry Benchmarks

Llama 3 has demonstrated remarkable performance in industry-standard benchmarks, particularly excelling in areas of language understanding, logical reasoning, and problem-solving abilities. The newly introduced models with 8 billion (8B) and 70 billion (70B) parameters show a significant improvement in understanding and response accuracy compared to the previous model, Llama 2.

Llama 3 is highly rated for its ability to generate code and perform tasks based on instructions, and these capabilities are further enhanced by Meta’s proprietary training methods. Additionally, this model supports multiple languages, delivering high-quality performance in over 30 languages.

Currently, Meta has released two versions of the Llama 3 model: Llama 3 8B, with 8 billion parameters, and Llama 3 70B, with 70 billion parameters. The figure below shows the results of the benchmark evaluations published by Meta, with the 70B model exhibiting higher performance than Gemini Pro 1.5 and Claude 3 Sonnet.

Source: https://llama.meta.com/llama3/

Comparison between Llama 3 and Llama 2

Llama 3 shows significant improvements over Llama 2 in many aspects. Specifically, the dataset used for training the model has increased sevenfold, and the data related to code has quadrupled.

As a result, the model has become more efficient at handling complex language tasks. Particularly, Llama 3 significantly surpasses the previous model in its ability to generate code and perform tasks based on instructions.

The adoption of a new tokenizer has also improved the efficiency of language encoding, contributing to an overall performance enhancement. Additionally, a design considering safety has been implemented, introducing a new filtering system to reduce the risk of generating inappropriate responses.

| Features | Llama 3 | Llama 2 |
| --- | --- | --- |
| Parameter Count | 8 billion and 70 billion | 7 billion, 13 billion, and 70 billion |
| Benchmark Performance | Improved performance on ARC, DROP, and MMLU | Lower scores on the same benchmarks |
| Capabilities | Strong reasoning abilities, creative text generation | Similar abilities, but to a lesser extent |
| Availability | Model weights publicly released by Meta | Model weights also publicly released by Meta |

Trying Out Llama 3 with Groq

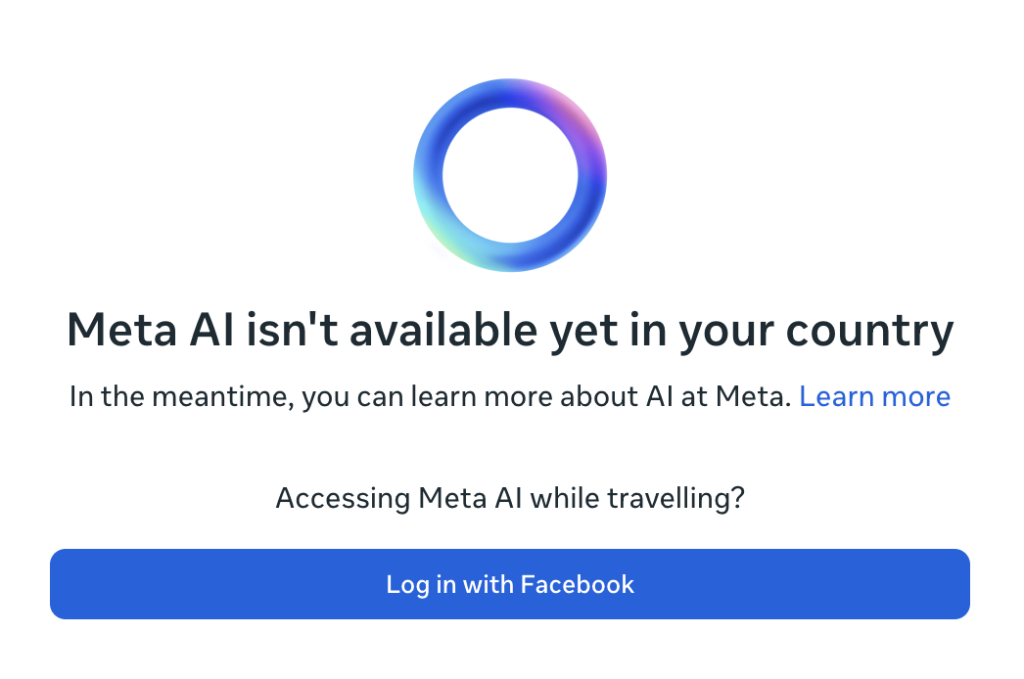

As of now, “Meta AI” is not yet available in Japan

Ideally, one would be able to experience fast image generation and chatting using Llama 3 through the AI assistant “Meta AI” released by Meta. However, as of May 1, 2024, when this article was written, Meta AI is only available in English-speaking regions and has not been released in Japan.

Trying Llama 3 with Groq

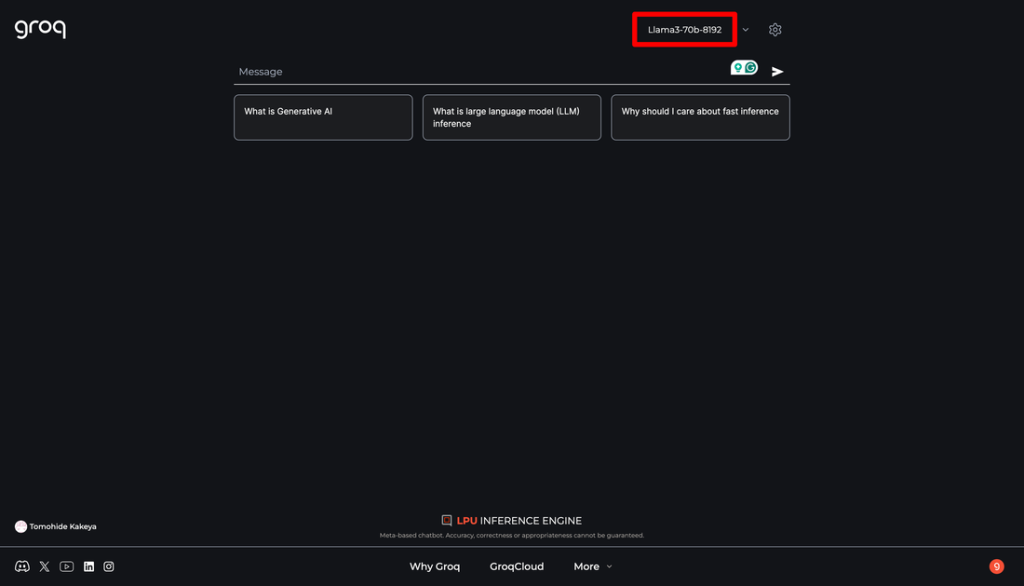

Nevertheless, since Groq supports Llama 3, you can try Llama 3 simply by logging into Groq. Moreover, it’s super fast and free!

Usage is simple: log into Groq and select the model from the dropdown menu in the upper right corner. Since we are trying it anyway, let’s choose the 70B model.

Here is a casual instruction I tried. Even if you ask for Japanese in the prompt, the output comes back in English, so set the System Prompt to request Japanese output beforehand via the gear icon in the upper right.

The throughput is about 300 tokens per second, and you can tell that the output is incredibly fast. However, for this short-story prompt the quality was not good: the same sentences were generated repeatedly.

Additionally, the Groq interface is not very practical for real use with Japanese input, because pressing the Enter key to confirm a kana-kanji conversion also submits the chat message.

Trying the Browser Version of Chatbot UI

While searching for a good method, I found something called Chatbot UI, which is compatible with Groq’s API, enabling the use of Llama 3 through Groq.

Chatbot UI has a ChatGPT-like interface that allows switching between various LLMs.

Chatbot UI is open-source and can be run on a local PC, but a browser version is also available. Since setting up a local environment was cumbersome, I decided to use the browser version for now.

To use multiple LLMs in Chatbot UI, you need to connect to each LLM’s API, which requires obtaining API keys first. This time I want to compare ChatGPT, Claude, and Groq (Llama 3), so I will obtain API keys from OpenAI, Anthropic, and Groq. Besides the API Key, the API connection also needs the Model ID and, optionally, the Base URL.

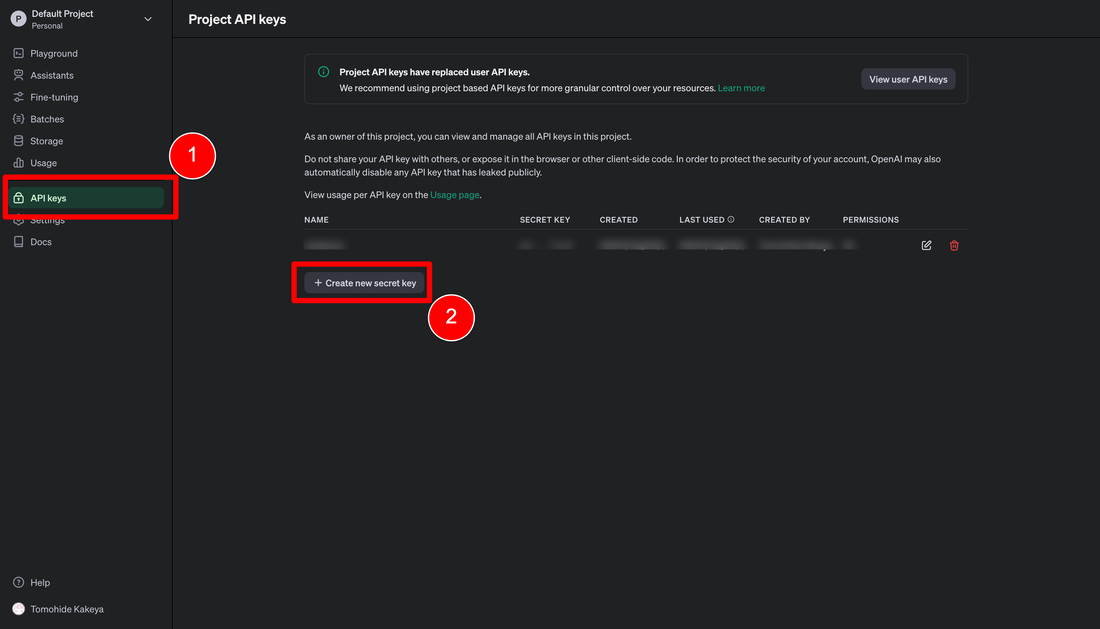

Obtaining OpenAI’s API Key

You can issue it from the “API keys” menu in the OpenAI management screen.

There’s a button called “Create new secret key”; clicking it will open a popup. Once you enter the necessary information, the API Key will be issued. Make sure to save this key securely, as you cannot check it later.

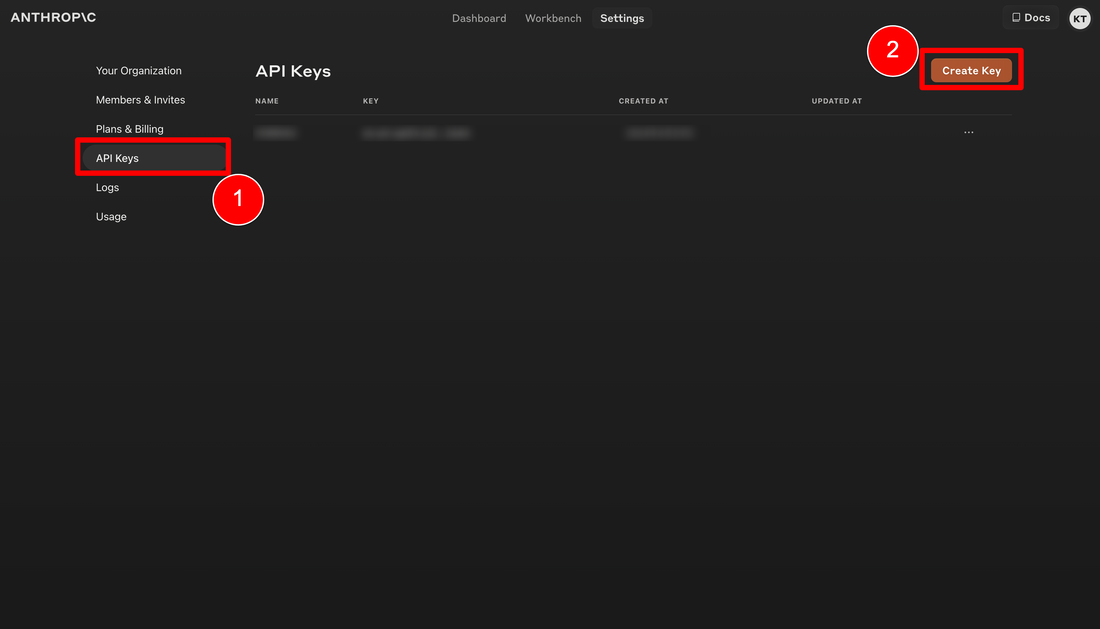

Obtaining Anthropic’s API Key

You can obtain it in almost the same way as OpenAI’s. It can be issued from the “API Keys” menu in the Anthropic management screen. Click the “Create Key” button to open a popup, then enter a name for the key to issue it.

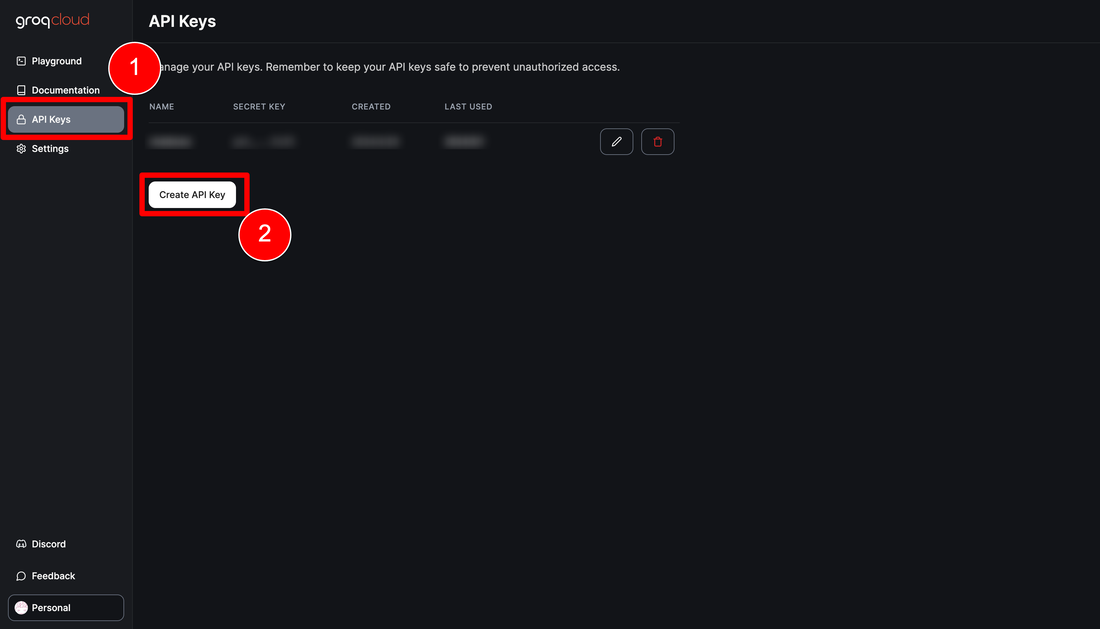

Obtaining Groq’s API Key

The method to obtain Groq’s API Key is similar to the above.

There is a link called “GroqCloud” at the bottom of the Groq screen you logged into earlier. Clicking this link opens the management screen, where you can issue a key from the “Create API Key” in the “API Keys” menu.

Logging into the Browser Version of Chatbot UI

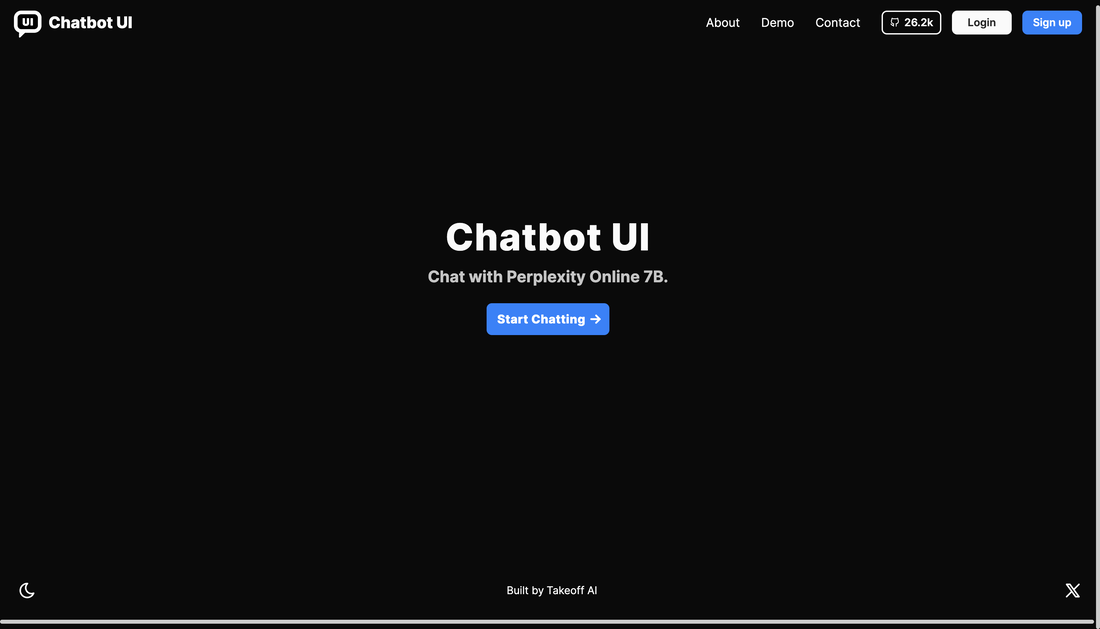

Now that everything is set up, let’s log into the browser version of Chatbot UI.

When you access the browser version of Chatbot UI, the initial screen displayed will have a “Start Chatting” button. Click this button to proceed.

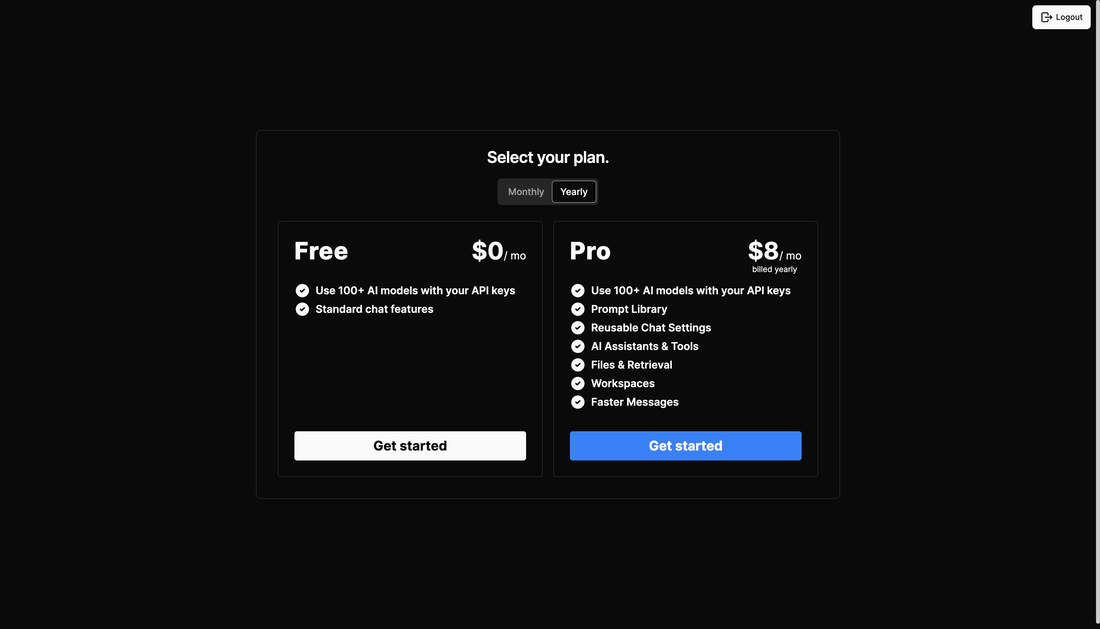

I wanted to use the file search function for performance comparison, so I opted for the paid plan. It’s $8 per month with an annual contract, or $10 per month with monthly renewals.

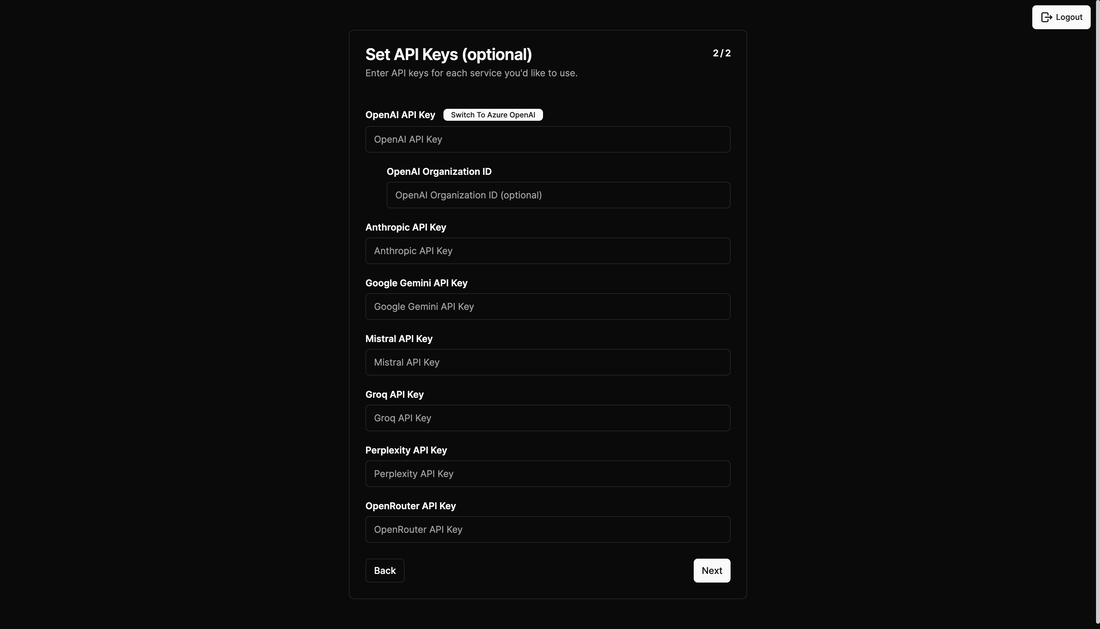

Once the payment setup is complete, you will be taken to a screen to register the API Key as shown above.

Enter the keys you obtained earlier from OpenAI, Anthropic, and Groq. This completes the initial registration, and the chat screen will open.

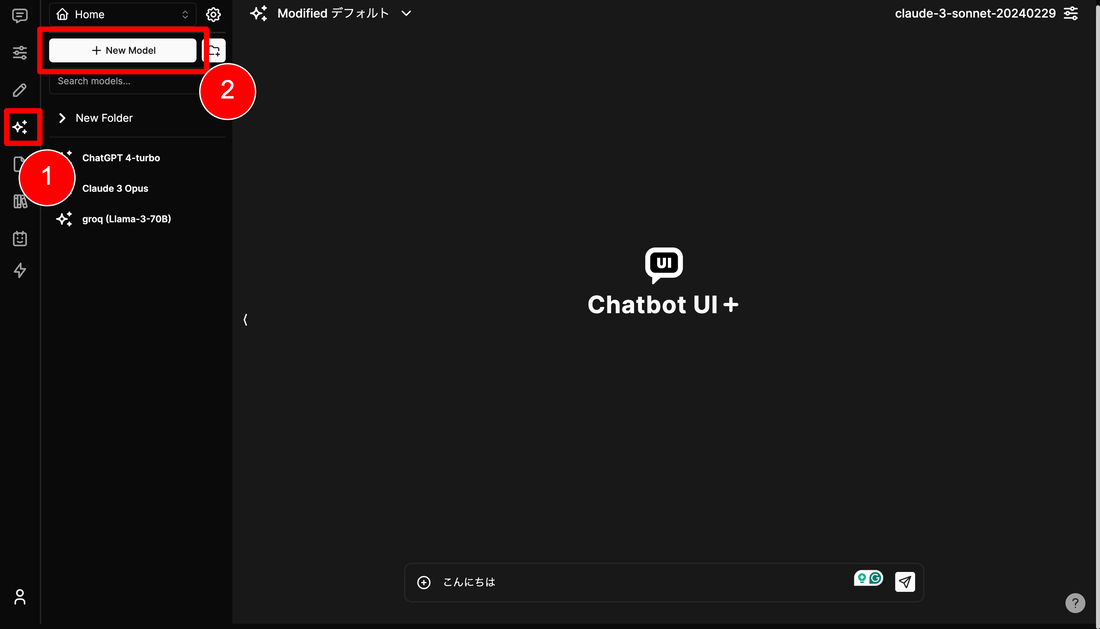

Next, you need to register the models you want to use (registering the API Keys alone is not enough to use them). The sparkle icon on the left is the model menu; click the “New Model” button there to register a model. This time, I entered the following:

- ChatGPT 4-turbo

  - Name: ChatGPT 4-turbo (any name you can recognize)

  - Model ID: gpt-4-turbo

  - Base URL: none

  - API Key: the one you obtained earlier

- Claude 3 Opus

  - Name: Claude 3 Opus (any name you can recognize)

  - Model ID: claude-3-opus-20240229

  - Base URL: https://api.anthropic.com/v1

  - API Key: the one you obtained earlier

- Groq (Llama-3-70B)

  - Name: Groq (Llama-3-70B) (any name you can recognize)

  - Model ID: llama3-70b-8192

  - Base URL: https://api.groq.com/openai/v1

  - API Key: the one you obtained earlier
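To show how the Groq entry's three values fit together, here is a minimal sketch that builds an OpenAI-style chat completion request from the Base URL, Model ID, and API Key listed above. It uses only the Python standard library. The `GROQ_API_KEY` environment variable name, the system prompt text, and the custom User-Agent header are assumptions made for this example.

```python
import json
import os
import urllib.request

GROQ_BASE_URL = "https://api.groq.com/openai/v1"  # Base URL from the list above
GROQ_MODEL_ID = "llama3-70b-8192"                 # Model ID from the list above


def build_chat_request(api_key: str, user_text: str,
                       system_prompt: str = "Always answer in Japanese.") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": GROQ_MODEL_ID,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
        ],
    }
    return urllib.request.Request(
        url=f"{GROQ_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            # Assumption: a custom User-Agent, in case a gateway rejects urllib's default.
            "User-Agent": "groq-example/0.1",
        },
        method="POST",
    )


def ask(api_key: str, user_text: str) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(build_chat_request(api_key, user_text)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # GROQ_API_KEY is an assumed environment variable name.
    key = os.environ.get("GROQ_API_KEY")
    if key:
        print(ask(key, "Explain what an LPU is in two sentences."))
```

The system message plays the same role as the System Prompt setting in the Groq chat screen: it asks for Japanese output before the user's text is processed.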

You can refer to the following for the Model ID:

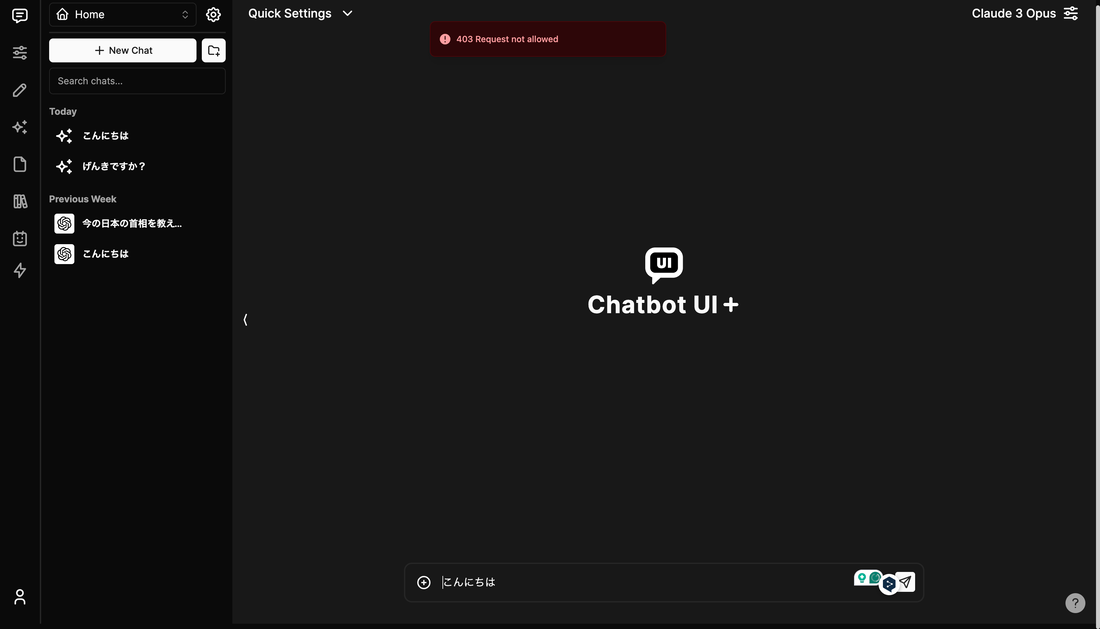

With this setup, GPT-4 Turbo and Groq worked, but Claude returned an error as shown above. Changing the Base URL and Model ID did not resolve it, so I gave up and decided to build a local version instead… If anyone has managed to get Claude 3 Opus working in the browser version, I would be happy to hear from you.

Trying the Local Version of Chatbot UI

Setting Up the Local Environment for Chatbot UI

The local version can be set up easily using the following steps. It worked by following the instructions in the GitHub repository by developer Mckay Wrigley. Execute the following commands in the Mac terminal to set it up.

1. Clone the Repository

First, clone the Chatbot UI repository from GitHub.

$ git clone https://github.com/mckaywrigley/chatbot-ui.git

2. Install Dependencies

Navigate to the cloned directory and install the necessary dependencies.

$ cd chatbot-ui

$ npm install

3. Install Docker

Docker is required to run Supabase locally. Install Docker from the official site.

4. Install Supabase CLI

To install the Supabase CLI, execute the following command.

$ brew install supabase/tap/supabase

5. Start Supabase

Start Supabase by executing the following command.

$ supabase start

6. Set Environment Variables

Copy the .env.local.example file to .env.local and set the necessary values.

$ cp .env.local.example .env.local

7. Check Supabase Related Information

Run the following command to check values such as the API URL and keys, then enter them in the .env.local file.

$ supabase status

[Results of supabase status]

$ vi ./.env.local

- NEXT_PUBLIC_SUPABASE_URL: Specify the “API URL” from the status

- NEXT_PUBLIC_SUPABASE_ANON_KEY: Specify the “anon key” from the status

- SUPABASE_SERVICE_ROLE_KEY: Specify the “service_role key” from the status
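Copying these three values by hand is easy to get wrong, so here is a small sketch that turns the text printed by supabase status into .env.local lines. It assumes the output consists of "label: value" lines using the labels named above; the exact layout may differ between CLI versions, and the sample values are placeholders.

```python
# Map labels printed by `supabase status` to .env.local variable names.
# The "label: value" line format is an assumption about the CLI output.
LABEL_TO_ENV = {
    "API URL": "NEXT_PUBLIC_SUPABASE_URL",
    "anon key": "NEXT_PUBLIC_SUPABASE_ANON_KEY",
    "service_role key": "SUPABASE_SERVICE_ROLE_KEY",
}


def env_lines_from_status(status_text: str) -> list[str]:
    """Return KEY=value lines for the labels found in the status output."""
    found = {}
    for line in status_text.splitlines():
        # Split on the first colon only, so URLs keep their own colons.
        label, sep, value = line.partition(":")
        if sep and label.strip() in LABEL_TO_ENV:
            found[LABEL_TO_ENV[label.strip()]] = value.strip()
    # Keep a stable order regardless of how the CLI orders its lines.
    return [f"{name}={found[name]}" for name in LABEL_TO_ENV.values() if name in found]


# Placeholder output for illustration; real keys are long token strings.
sample = """
         API URL: http://127.0.0.1:54321
        anon key: example-anon-key
service_role key: example-service-role-key
"""
print("\n".join(env_lines_from_status(sample)))
```

The printed lines can then be pasted into .env.local in place of the corresponding empty entries.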

8. Set Up the Database

Check the SQL file for Supabase’s initial setup against the supabase status results and edit it if the values differ. In my case, it worked without any changes. Just make sure that the “service_role_key” on line 54 matches the service_role key from the supabase status results.

9. Run the Application Locally

Execute the following command to start the application locally.

$ npm run chat

Now, you can access the Chatbot UI application in the browser at http://localhost:3000.

Trying Llama 3 via Groq on Chatbot UI

When you access http://localhost:3000, an initial setup screen similar to the browser version will be displayed. Register the same API keys and models as before.

However, Claude 3 Opus showed a “404 Not Found” error and could not be used. Claude 3 Sonnet and Claude 3 Haiku, which can be specified by default, worked normally, so it seems that Chatbot UI may not support Opus yet.
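One possible reason for the 404, offered as an unverified assumption: a model registered with a Base URL may be called in the OpenAI style, that is, at the Base URL plus /chat/completions, whereas Anthropic's native Messages API is served at /v1/messages with different headers. The sketch below only builds the two URLs and header sets to make the mismatch visible. It sends nothing, and the function names are hypothetical.

```python
def openai_style_call(base_url: str, api_key: str) -> tuple[str, dict[str, str]]:
    """URL and headers an OpenAI-compatible client would use."""
    url = base_url.rstrip("/") + "/chat/completions"
    return url, {"Authorization": f"Bearer {api_key}"}


def anthropic_native_call(api_key: str) -> tuple[str, dict[str, str]]:
    """URL and headers of Anthropic's native Messages API."""
    url = "https://api.anthropic.com/v1/messages"
    return url, {"x-api-key": api_key, "anthropic-version": "2023-06-01"}


# "sk-placeholder" is a dummy key; nothing is sent over the network.
assumed_url, assumed_headers = openai_style_call("https://api.anthropic.com/v1", "sk-placeholder")
native_url, native_headers = anthropic_native_call("sk-placeholder")

print(assumed_url)
print(native_url)
print("same endpoint:", assumed_url == native_url)
```

If this assumption holds, it would also fit the observation that the built-in Sonnet and Haiku entries work, since those would go through Chatbot UI's own Anthropic integration.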

Let’s specify “Groq (Llama 3)” as the model, and enter some prompts to see the output of Llama 3.

First, I asked about “GPT2,” the mysterious high-performance AI that surfaced recently, which is a question with no definitive answer.

The response was quite ambiguous.

Next, let’s check the speed of the output.

It’s undeniably fast! Even including the API round trip, there is no frustrating wait at all.
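For readers who want to put numbers on this impression, here is a minimal sketch that measures time to first token and tokens per second over any stream of tokens. The `fake_stream` generator is a hypothetical stand-in for a real streaming API response, and its delay is arbitrary.

```python
import time
from typing import Callable, Iterable


def measure_stream(tokens: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> dict[str, float]:
    """Consume a token stream and report latency and throughput."""
    start = clock()
    first_token_at = None
    count = 0
    for _ in tokens:
        now = clock()
        if first_token_at is None:
            first_token_at = now  # arrival time of the first token
        count += 1
    elapsed = clock() - start
    return {
        "tokens": count,
        "time_to_first_token": (first_token_at - start) if first_token_at is not None else 0.0,
        "tokens_per_second": count / elapsed if elapsed > 0 else 0.0,
    }


def fake_stream(n: int, delay: float):
    """Hypothetical stand-in for a streaming API response."""
    for i in range(n):
        time.sleep(delay)
        yield f"tok{i}"


print(measure_stream(fake_stream(50, 0.002)))
```

The clock is passed in as a parameter so the measurement logic can be checked with fixed timestamps instead of real waiting.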

Llama 3 vs GPT-4 Turbo vs Claude 3 Sonnet: A Three-Round Match!

Let’s input the same three prompts to Llama 3, GPT-4 Turbo, and Claude 3 Sonnet and compare the outputs.

Generating a Text to Explain a Keyword

The prompt is:

“What is Retrieval-Augmented Generation (RAG)? Summarize it in about 500 characters in a way that a fourth-year university student majoring in computer engineering can understand.”

Comparison of Outputs (from left: Llama 3, GPT-4 Turbo, Claude 3 Sonnet. Prompts were submitted starting from the right; shown at double speed.)

Comparison of Output Results (from left: Llama 3, GPT-4 Turbo, Claude 3 Sonnet)

There was no significant difference in the precision of the text output by any of them. However, the output speed was overwhelmingly faster with Groq.

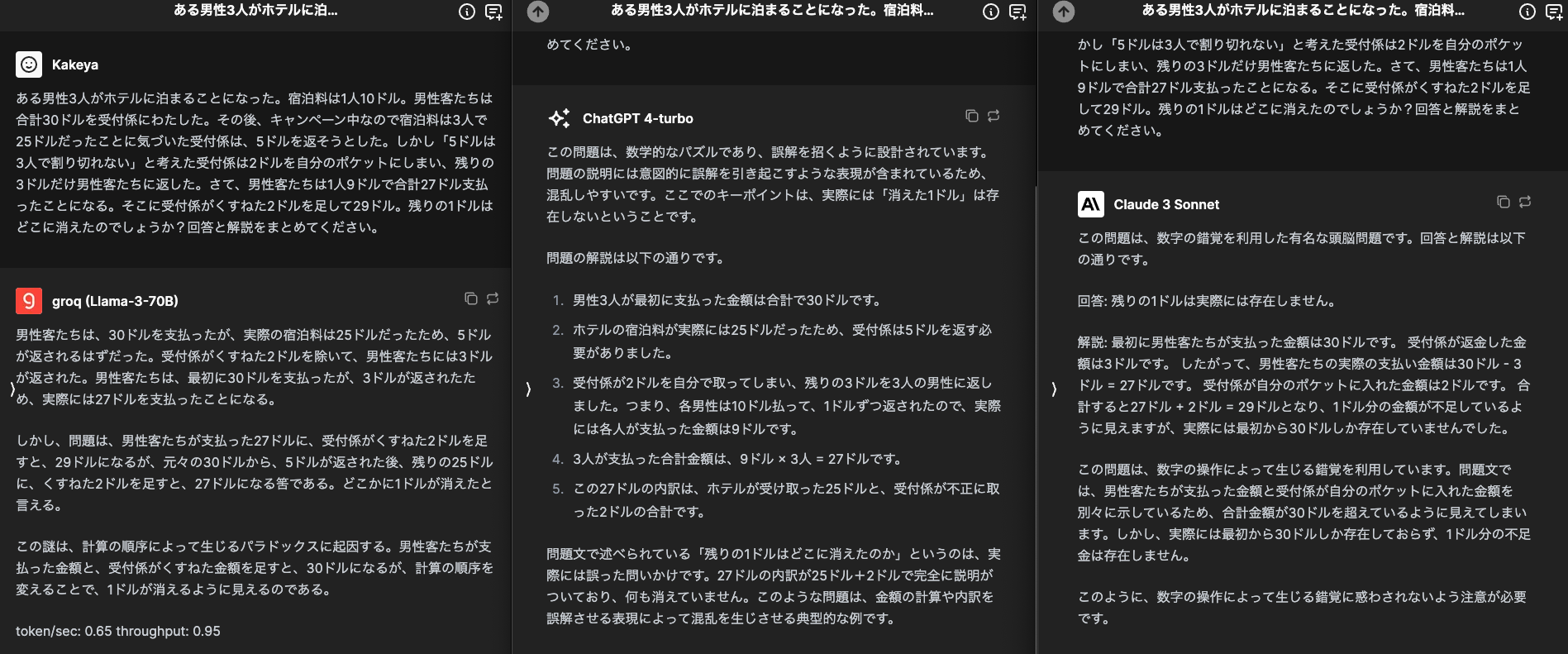

Solving a Logical Quiz

Here is a typical logical quiz. The prompt is:

“Three men stay at a hotel. The room rate is $10 per person. The guests paid a total of $30 to the receptionist. Later, the receptionist realized that there was a campaign and the room rate was $25 for three people, so they decided to return $5. However, the receptionist thought that $5 couldn’t be evenly divided among three people, so they put $2 in their pocket and returned $3 to the guests. The guests paid $9 each, totaling $27. Adding the $2 the receptionist pocketed makes $29. Where did the remaining $1 go? Provide an answer and explanation.”

Comparison of Outputs (from left: Llama 3, GPT-4 Turbo, Claude 3 Sonnet. Prompts were submitted starting from the right; shown at double speed.)

Comparison of Output Results (from left: Llama 3, GPT-4 Turbo, Claude 3 Sonnet)

The result was a complete victory for GPT-4 Turbo.

GPT-4 Turbo provided a logical and clear explanation of the answer.

Claude 3 Sonnet also provided an explanation, but it felt somewhat unclear in its reasoning. Llama 3 did not explain the essential “why,” so it was judged to be unsuccessful.
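For reference, the “why” of this quiz comes down to simple bookkeeping, which the following sketch spells out using the amounts from the prompt. The variable names are only for illustration.

```python
# Follow the $30 through the puzzle, in whole dollars.
paid_initially = 3 * 10        # three guests pay $10 each
hotel_keeps = 25               # discounted rate for the room
receptionist_pockets = 2
returned_to_guests = 3         # $1 back to each guest

# Every dollar of the original $30 is accounted for.
assert hotel_keeps + receptionist_pockets + returned_to_guests == paid_initially

# What the guests are out of pocket after the refund.
net_paid_by_guests = paid_initially - returned_to_guests
# The $27 already contains the receptionist's $2, so adding $2 again is the trick.
assert net_paid_by_guests == hotel_keeps + receptionist_pockets

print(f"guests paid {net_paid_by_guests} = hotel {hotel_keeps} + receptionist {receptionist_pockets}")
print(f"{net_paid_by_guests} + refund {returned_to_guests} = {net_paid_by_guests + returned_to_guests}")
```

No dollar is missing: the $2 should be subtracted from the $27 to get the hotel's $25, and the $3 refund should be added to the $27 to get back to $30.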

Summary of an Uploaded Article

I uploaded a paper about a model called RAPTOR that improves document retrieval accuracy in RAG and asked for a summary. The prompt is:

“Summarize the content of the uploaded PDF file in a way that a fourth-year university student majoring in computer engineering can understand, using as concrete and quantitative expressions as possible, in about 1000 characters in Japanese. Please briefly summarize the technical content focusing on important points and clearly state what is innovative and what is superior compared to conventional technology.”

Due to the considerable time it took to upload the file and the length of the output, I will paste the generated text directly below instead of screenshots.

ーーー

<Llama 3>

The provided text appears to be a collection of research papers and articles related to natural language processing, specifically focusing on language models, question answering, and text summarization. Here is a summary of the main points:

Language Models: The text discusses the development of language models, such as GPT-4, that can generate coherent and context-specific text. These models are trained on large datasets and can be fine-tuned for specific tasks.

Question Answering: The text explores various question answering models, including UnifiedQA, that can retrieve relevant information from a large corpus of text. These models are evaluated on datasets such as QuALITY, QASPER, and NarrativeQA.

Text Summarization: The text touches on the topic of text summarization, highlighting the importance of summarizing long documents and the challenges associated with it. Models such as Longformer and Hybrid Hierarchical Retrieval are mentioned as approaches to tackle this task.

Retrieval-Augmented Generation: The text discusses the concept of retrieval-augmented generation, which involves using a retrieval mechanism to augment language models with relevant information from a knowledge base.

Evaluation Metrics: The text mentions various evaluation metrics used to assess the performance of language models and question answering systems, including BLEU score, ROUGE score, and METEOR score.

Applications: The text hints at the potential applications of these technologies in areas such as information retrieval, text classification, and chatbots.

In summary, the text provides an overview of the current state of natural language processing research, highlighting advancements in language models, question answering, and text summarization.

ーーー

<GPT-4 Turbo>

Summarizing the content of the PDF file, it describes research on a new information retrieval system called “RAPTOR.” This system can provide more relevant and comprehensive information compared to traditional retrieval systems. Particularly, RAPTOR can obtain detailed explanations of not only short descriptions but also the main parts of a story, improving performance in downstream tasks.

Specifically, comparing RAPTOR with traditional DPR (Dense Passage Retrieval), RAPTOR is said to be able to generate answers considering a broader context. For example, in the story of Cinderella, DPR generates answers considering only the early part of the story, while RAPTOR captures the theme of the story from a more holistic perspective, providing a more complete answer.

The innovation of this technology lies in its ability to efficiently extract important parts of information, especially in long documents or texts containing complex information, enabling more accurate information provision. This allows users to access the information they seek quickly and accurately.

Additionally, the introduction of RAPTOR is expected to significantly improve the accuracy and speed of information retrieval compared to conventional technologies. This would be a significant advantage, especially for companies and research institutions dealing with large amounts of data.

This research represents an important advancement in the field of information retrieval and is expected to have many more applications in the future. For a student majoring in computer engineering, understanding such cutting-edge technology and exploring its potential will be a highly valuable experience.

ーーー

<Claude 3 Sonnet>

To summarize the content of this PDF, it presents the following points:

The innovative long-text retrieval and summarization technology, RAPTOR, is introduced. Compared to traditional retrieval methods, it can accurately retrieve more relevant information from entire documents, significantly improving the accuracy of question-answering tasks.

Specifically, the following three points are innovative:

It adopts an efficient retrieval method that considers the hierarchical structure of documents, enabling the precise extraction of relevant information even from large, long documents.

It naturally summarizes the retrieved information, generating top-level nodes that can directly answer questions.

Combined with the large language model GPT-4, it achieves high question-answering accuracy.

Evaluation experiments show that the question-answering accuracy on the NarrativeQA dataset has significantly improved compared to traditional methods. In extracting information and answering questions from long documents, RAPTOR has achieved groundbreaking results.

ーーー

Even though I specified “in Japanese,” Llama 3 responded in English… However, Llama 3 seems to have the highest technical specificity. It feels like a draw between Llama 3 and GPT-4 Turbo, with Claude 3 Sonnet coming in next.

Summary

The article has become quite long as I tried various things, but to summarize, here are my impressions:

- Groq is fast…! And affordable…!

- Chatbot UI is convenient…!

- Although Llama 3 was rated slightly better than Claude 3 Sonnet in benchmarks, it feels almost equivalent in practice. However, it is amazing that this is available as open source…!