The true potential of Large Language Models (LLMs) is unlocked when they can interact with specific, private, and up-to-date data outside their initial training corpus. This is the core principle of Retrieval-Augmented Generation (RAG). The Gemini File Search Tool is Google’s dedicated solution for enabling RAG, providing a fully managed, scalable, and reliable system to ground the Gemini model in your own proprietary documents.

This guide serves as a complete walkthrough (AI Quest Type 2): we’ll explore the tool’s advanced features, demonstrate its behavior via the official demo, and provide a detailed, working Python code sample to show you exactly how to integrate RAG into your applications.

1. Core Features and Technical Advantage

1.1. Why Use a Managed RAG Solution?

Building a custom RAG pipeline involves several complex, maintenance-heavy steps: designing a chunking strategy, selecting and running an embedding model, maintaining a vector store (a vector database), and integrating the retrieved passages back into the prompt.
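To make those steps concrete, here is a deliberately tiny sketch of a hand-rolled pipeline. It is only an illustration: the bag-of-words `embed` function stands in for a real embedding model, the in-memory list stands in for a vector database, and every function name here is our own invention rather than part of any Gemini API.

```python
import math
import re
from collections import Counter


def chunk_text(text: str) -> list[str]:
    """Naive chunking: one chunk per sentence."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(text: str) -> list[tuple[str, Counter]]:
    """The 'vector store': a list of (chunk, vector) pairs kept in memory."""
    return [(chunk, embed(chunk)) for chunk in chunk_text(text)]


def retrieve(index: list[tuple[str, Counter]], query: str, top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


def build_prompt(query: str, passages: list[str]) -> str:
    """Integrate the retrieved passages back into the prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


document = (
    "The monthly cost for the basic service tier is $10 USD. "
    "The refund policy is valid for 30 days from the date of purchase."
)
index = build_index(document)
top = retrieve(index, "What is the refund policy?")
print(build_prompt("What is the refund policy?", top))
```

Even this toy version needs chunking, vectorizing, ranking, and prompt assembly; a production pipeline would add a learned embedding model, persistent storage, and re-indexing on top of that.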

The Gemini File Search Tool eliminates this complexity by providing a fully managed RAG pipeline:

- Automatic Indexing: When you upload a file, the system automatically handles document parsing, chunking, and generating vector embeddings using a state-of-the-art model.

- Scalable Storage: Files are stored and indexed in a dedicated File Search Store—a persistent, highly available vector repository managed entirely by Google.

- Zero-Shot Tool Use: You don’t write any search code. You simply enable the tool, and the Gemini model decides on its own when to call the File Search service to retrieve context for a given query.

1.2. Key Features

- Semantic Search: Unlike simple keyword matching, File Search uses the generated vector embeddings to understand the meaning and intent (semantics) of your query, fetching the most relevant passages, even if the phrasing is different.

- Built-in Citations: Crucially, answers grounded in your files include clear **citations (Grounding Metadata)** that point to the source file and the specific text snippet used. This supports **transparency and trust**.

- Broad File Support: Supports common formats including PDF, DOCX, TXT, JSON, and more.
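The citation metadata mentioned in the list above can also be read programmatically. The helper below is a sketch, not official API usage: it assumes the response exposes `candidates[].grounding_metadata.grounding_chunks[].retrieved_context` with `title` and `text` attributes, which is our understanding of the google-genai SDK response shape and should be checked against the SDK version you install. The `fake_response` object is a hypothetical stand-in so the snippet runs without an API key.

```python
from types import SimpleNamespace


def extract_citations(response) -> list[dict]:
    """Collect unique {title, text} pairs from a response's grounding chunks."""
    citations = []
    seen = set()
    for candidate in getattr(response, "candidates", None) or []:
        metadata = getattr(candidate, "grounding_metadata", None)
        for chunk in getattr(metadata, "grounding_chunks", None) or []:
            # Assumed attribute name for File Search results.
            context = getattr(chunk, "retrieved_context", None)
            if context is None:
                continue
            key = (getattr(context, "title", None), getattr(context, "text", None))
            if key not in seen:
                seen.add(key)
                citations.append({"title": key[0], "text": key[1]})
    return citations


# Hypothetical stand-in for a real API response (same chunk returned twice).
snippet = SimpleNamespace(
    retrieved_context=SimpleNamespace(
        title="Pricing_Override.txt",
        text="The official retail price for Product X is set at $10,000 USD.",
    )
)
fake_response = SimpleNamespace(
    candidates=[
        SimpleNamespace(
            grounding_metadata=SimpleNamespace(grounding_chunks=[snippet, snippet])
        )
    ]
)

for citation in extract_citations(fake_response):
    print(f"{citation['title']}: {citation['text']}")
```

Returning plain dictionaries keeps the helper independent of SDK types, so the same function can feed a UI, a log, or an automated check.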

2. Checking Behavior via the Official Demo App: A Visual RAG Walkthrough 🔎

This section checks the tool’s behavior in the official demo app using a structured test scenario. The goal is to show how the Gemini model uses the File Search Tool to ground its answers in your private data, confirming that RAG is active and reliable.

2.1. Test Scenario Preparation

To prove that the model prioritizes the uploaded file over its general knowledge, we’ll use a file containing specific, non-public details.

Access: Go to the “Ask the Manual” template on Google AI Studio: https://aistudio.google.com/apps/bundled/ask_the_manual?showPreview=true&showAssistant=true.

Test File (Pricing_Override.txt):


The official retail price for Product X is set at $10,000 USD.

All customer service inquiries must be directed to Ms. Jane Doe at extension 301.

We currently offer an unlimited lifetime warranty on all purchases.

2.2. Step-by-Step Execution and Observation

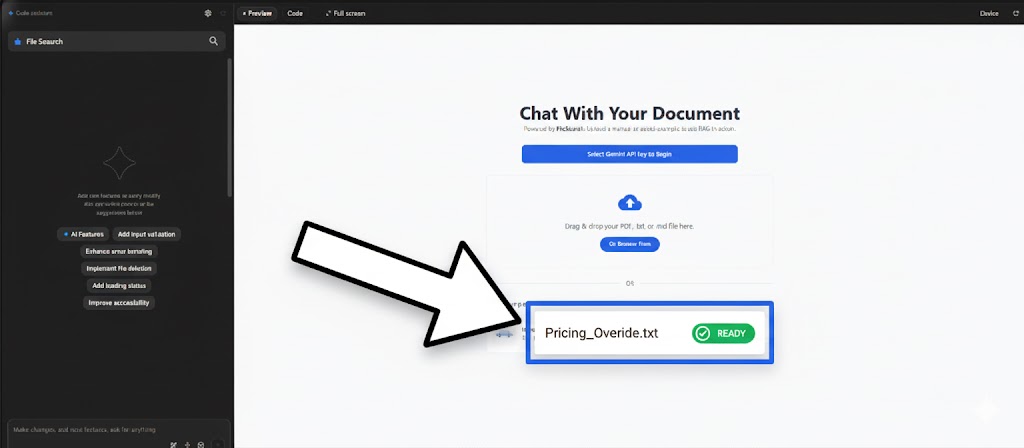

Step 1: Upload the Source File

Navigate to the demo and upload the Pricing_Override.txt file. The File Search system indexes the content, and the file should be listed as “Ready” or “Loaded” in the interface, confirming the source is available for retrieval.

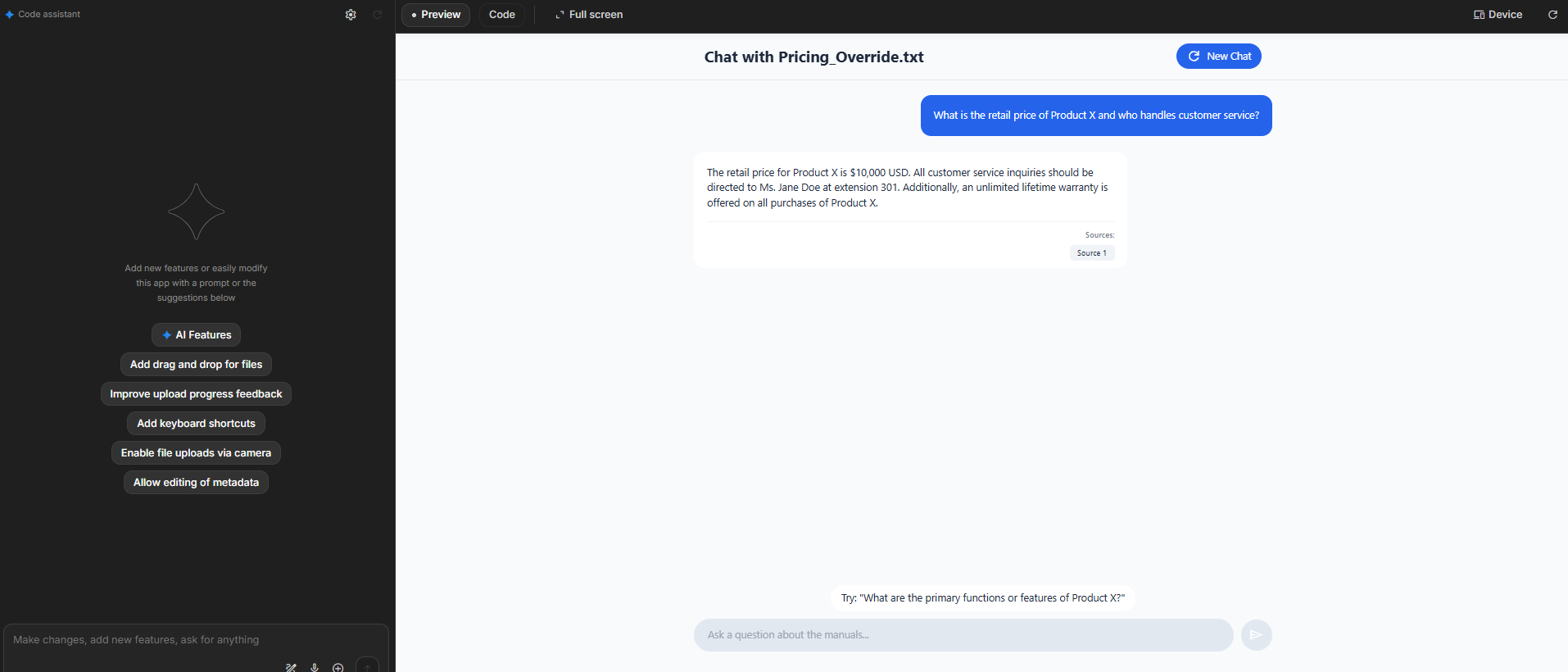

Step 2: Pose the Retrieval Query

Ask a question that can be answered only from the file: “What is the retail price of Product X and who handles customer service?” The model internally triggers the File Search Tool to retrieve the specific price and contact person from the file’s content.

Step 3: Observe Grounded Response & Citation

Observe the model’s response. The expected RAG behavior is that the response states the file-specific price ($10,000 USD) and contact (Ms. Jane Doe), followed immediately by a citation mark (e.g., [1] The uploaded file). This confirms the answer is grounded.

![Image of the Gemini AI Studio interface showing the model's response with price and contact, and a citation [1] linked to the uploaded file](https://scuti.asia/wp-content/uploads/2025/11/Screenshot-2025-11-20-110054-1.png)

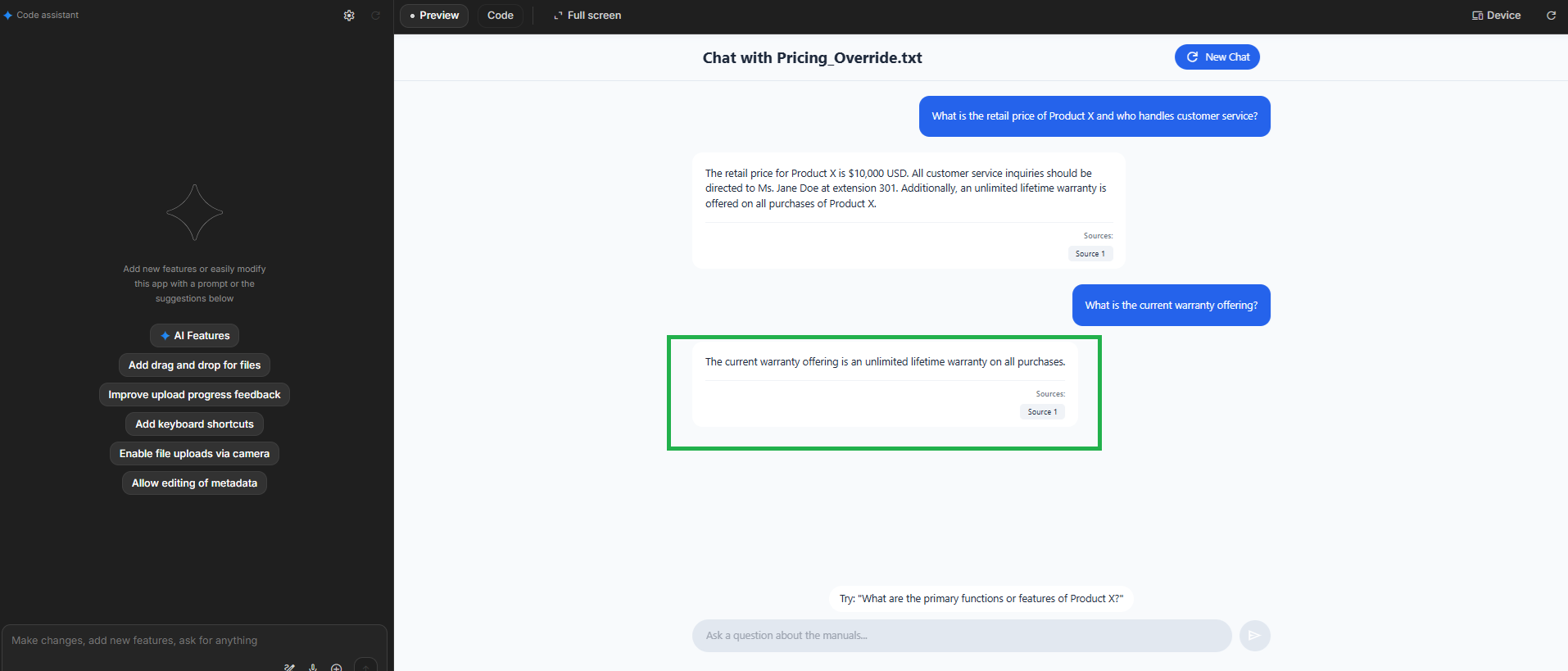

Step 4: Verify Policy Retrieval

Ask a supplementary policy question: “What is the current warranty offering?” The model successfully retrieves and restates the specific policy phrase from the file, demonstrating continuous access to the knowledge base.
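If you want to repeat this check without eyeballing each answer, the scenario can be captured as a small script. This is a sketch under our own assumptions: `missing_facts` and `SCENARIO` are names we made up, the sample answers are hypothetical strings rather than real model output, and a plain substring match is only a rough proxy for "grounded".

```python
# Facts from Pricing_Override.txt that each answer is expected to contain.
SCENARIO = {
    "What is the retail price of Product X and who handles customer service?": [
        "$10,000",
        "Jane Doe",
    ],
    "What is the current warranty offering?": ["lifetime warranty"],
}


def missing_facts(answer: str, expected_facts: list[str]) -> list[str]:
    """Return the expected facts that do not appear in the answer (case-insensitive)."""
    lowered = answer.lower()
    return [fact for fact in expected_facts if fact.lower() not in lowered]


# Hypothetical answers pasted from the demo app; the second one is not grounded.
sample_answers = {
    "What is the retail price of Product X and who handles customer service?": (
        "The retail price of Product X is $10,000 USD, and customer service "
        "is handled by Ms. Jane Doe at extension 301. [1]"
    ),
    "What is the current warranty offering?": "Warranty terms vary by product.",
}

for question, facts in SCENARIO.items():
    missing = missing_facts(sample_answers[question], facts)
    status = "grounded" if not missing else f"missing {missing}"
    print(f"{question} -> {status}")
```

A failed check like the second one is a signal to confirm that the file finished indexing and that the question actually triggers retrieval.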

Conclusion from Demo

This visual walkthrough confirms that the **File Search Tool is correctly functioning as a verifiable RAG mechanism**. The model successfully retrieves and grounds its answers in the custom data, ensuring accuracy and trust by providing clear source citations.

3. Getting Started: The Development Workflow

3.1. Prerequisites

- Gemini API Key: Set your key as an environment variable: GEMINI_API_KEY.

- Python SDK: Install the official Google GenAI library:

pip install google-genai

3.2. Three Core API Steps

The integration workflow uses three distinct API calls:

| Step | Method | Purpose |
|---|---|---|
| 1. Create Store | client.file_search_stores.create() | Creates a persistent container (the knowledge base) where your file embeddings will be stored. |
| 2. Upload File | client.file_search_stores.upload_to_file_search_store() | Uploads the raw file, triggers the LRO (Long-Running Operation) for indexing (chunking, embedding), and attaches the file to the Store. |
| 3. Generate Content | client.models.generate_content() | Calls the Gemini model (gemini-2.5-flash), passing the Store name in the tools configuration to activate RAG. |
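Because step 2 returns a long-running operation, your code has to wait for indexing to finish before querying. The helper below sketches one way to poll with an upper time limit. `wait_for_operation` is our own hypothetical helper, not an SDK function, and `FakeOperation` is a stand-in so the example runs offline. With the real client you would presumably pass `client.operations.get` as the `refresh` argument.

```python
import time


def wait_for_operation(operation, refresh, poll_interval=5.0, timeout=300.0, sleep=time.sleep):
    """Poll until operation.done is truthy, or raise TimeoutError after `timeout` seconds."""
    waited = 0.0
    while not operation.done:
        if waited >= timeout:
            raise TimeoutError(f"Operation not finished after {timeout} seconds")
        sleep(poll_interval)
        waited += poll_interval
        # Fetch the latest state of the operation.
        operation = refresh(operation)
    return operation


class FakeOperation:
    """Hypothetical stand-in that reports done after a fixed number of refreshes."""

    def __init__(self, polls_needed: int):
        self.polls_left = polls_needed

    @property
    def done(self) -> bool:
        return self.polls_left <= 0


def fake_refresh(operation: FakeOperation) -> FakeOperation:
    """Simulate one status check against the service."""
    operation.polls_left -= 1
    return operation


# Skip the real sleep so the demo finishes instantly.
finished = wait_for_operation(FakeOperation(2), fake_refresh, sleep=lambda seconds: None)
print(finished.done)
```

Passing `refresh` and `sleep` as parameters keeps the loop testable and avoids waiting forever if indexing stalls.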

4. Detailed Sample Code and Execution

This Python code demonstrates the complete life cycle of a RAG application, from creating the store to querying the model and cleaning up resources.

A. Sample File Content: service_guide.txt

The new account registration process includes the following steps: 1) Visit the website. 2) Enter email and password. 3) Confirm via the email link sent to your inbox. 4) Complete the mandatory personal information. The monthly cost for the basic service tier is $10 USD. The refund policy is valid for 30 days from the date of purchase. For support inquiries, please email [email protected].

B. Python Code (gemini_file_search_demo.py)

(The code block is presented as a full script for easy reference and testing.)

```python
import os
import time

from google import genai
from google.genai import types
from google.genai.errors import APIError

# --- Configuration ---
FILE_NAME = "service_guide.txt"
STORE_DISPLAY_NAME = "Service Policy Knowledge Base"
MODEL_NAME = "gemini-2.5-flash"


def run_file_search_demo():
    # Helper to create the local file for upload
    if not os.path.exists(FILE_NAME):
        file_content = """The new account registration process includes the following steps: 1) Visit the website. 2) Enter email and password. 3) Confirm via the email link sent to your inbox. 4) Complete the mandatory personal information. The monthly cost for the basic service tier is $10 USD. The refund policy is valid for 30 days from the date of purchase. For support inquiries, please email [email protected]."""
        with open(FILE_NAME, "w") as f:
            f.write(file_content)

    client = None
    file_search_store = None  # Initialize for cleanup in finally block

    try:
        print("💡 Initializing Gemini Client...")
        client = genai.Client()  # Reads GEMINI_API_KEY from the environment

        # 1. Create the File Search Store
        print(f"\n🚀 1. Creating File Search Store: '{STORE_DISPLAY_NAME}'...")
        file_search_store = client.file_search_stores.create(
            config={'display_name': STORE_DISPLAY_NAME}
        )
        print(f" -> Store Created: {file_search_store.name}")

        # 2. Upload and Import File into the Store (LRO)
        print(f"\n📤 2. Uploading and indexing file '{FILE_NAME}'...")
        operation = client.file_search_stores.upload_to_file_search_store(
            file=FILE_NAME,
            file_search_store_name=file_search_store.name,
            config={'display_name': f"Document {FILE_NAME}"}
        )

        # Poll the long-running operation until indexing completes
        while not operation.done:
            print(" -> Processing file... Please wait (5 seconds)...")
            time.sleep(5)
            operation = client.operations.get(operation)
        print(" -> File successfully processed and indexed!")

        # 3. Perform the RAG Query
        print(f"\n💬 3. Querying model '{MODEL_NAME}' with your custom data...")
        questions = [
            "What is the monthly fee for the basic tier?",
            "How do I sign up for a new account?",
            "What is the refund policy?"
        ]

        for i, question in enumerate(questions):
            print(f"\n --- Question {i+1}: {question} ---")
            response = client.models.generate_content(
                model=MODEL_NAME,
                contents=question,
                config=types.GenerateContentConfig(
                    tools=[
                        types.Tool(
                            file_search=types.FileSearch(
                                file_search_store_names=[file_search_store.name]
                            )
                        )
                    ]
                )
            )

            # 4. Print results and citations
            print(f" 🤖 Answer: {response.text}")
            candidate = response.candidates[0] if response.candidates else None
            metadata = candidate.grounding_metadata if candidate else None
            chunks = (metadata.grounding_chunks if metadata else None) or []
            if chunks:
                print(" 📚 Source Citation:")
                for chunk in chunks:
                    # Assumption: File Search snippets are exposed on
                    # chunk.retrieved_context (grounding chunks have no text_segment).
                    context = chunk.retrieved_context
                    snippet = context.text if context else None
                    print(f" - From: '{FILE_NAME}' (Snippet: '{snippet}')")
            else:
                print(" (No specific citation found.)")

    except APIError as e:
        print(f"\n❌ [API ERROR] An error occurred while calling the API: {e}")
    except Exception as e:
        print(f"\n❌ [GENERAL ERROR] An unexpected error occurred: {e}")
    finally:
        # 5. Clean up resources (Essential for managing quota)
        if client and file_search_store:
            print(f"\n🗑️ 4. Cleaning up: Deleting File Search Store {file_search_store.name}...")
            # Assumption: 'force' is needed to delete a store that still holds documents.
            client.file_search_stores.delete(
                name=file_search_store.name, config={'force': True}
            )
            print(" -> Store successfully deleted.")
        if os.path.exists(FILE_NAME):
            os.remove(FILE_NAME)
            print(f" -> Deleted local sample file '{FILE_NAME}'.")


if __name__ == "__main__":
    run_file_search_demo()
```

C. Demo Execution and Expected Output 🖥️

When you run the Python script, the output should resemble the following: the model’s responses are derived from the service_guide.txt file, as confirmed by the citations. The exact wording of the answers and the length of the cited snippets may vary between runs.

```
💡 Initializing Gemini Client...
...
 -> File successfully processed and indexed!

💬 3. Querying model 'gemini-2.5-flash' with your custom data...

 --- Question 1: What is the monthly fee for the basic tier? ---
 🤖 Answer: The monthly cost for the basic service tier is $10 USD.
 📚 Source Citation:
 - From: 'service_guide.txt' (Snippet: 'The monthly cost for the basic service tier is $10 USD.')

 --- Question 2: How do I sign up for a new account? ---
 🤖 Answer: To sign up, you need to visit the website, enter email and password, confirm via the email link, and complete the mandatory personal information.
 📚 Source Citation:
 - From: 'service_guide.txt' (Snippet: 'The new account registration process includes the following steps: 1) Visit the website. 2) Enter email and password. 3) Confirm via the email link sent to your inbox. 4) Complete the mandatory personal information.')

 --- Question 3: What is the refund policy? ---
 🤖 Answer: The refund policy is valid for 30 days from the date of purchase.
 📚 Source Citation:
 - From: 'service_guide.txt' (Snippet: 'The refund policy is valid for 30 days from the date of purchase.')

🗑️ 4. Cleaning up: Deleting File Search Store fileSearchStores/...
 -> Store successfully deleted.
 -> Deleted local sample file 'service_guide.txt'.
```

Conclusion

The **Gemini File Search Tool** provides an elegant, powerful, and fully managed path to RAG. By abstracting away the complexities of vector databases and indexing, it allows developers to quickly build **highly accurate, reliable, and grounded AI applications** using their own data. This tool is essential for anyone looking to bridge the gap between general AI capabilities and specific enterprise knowledge.