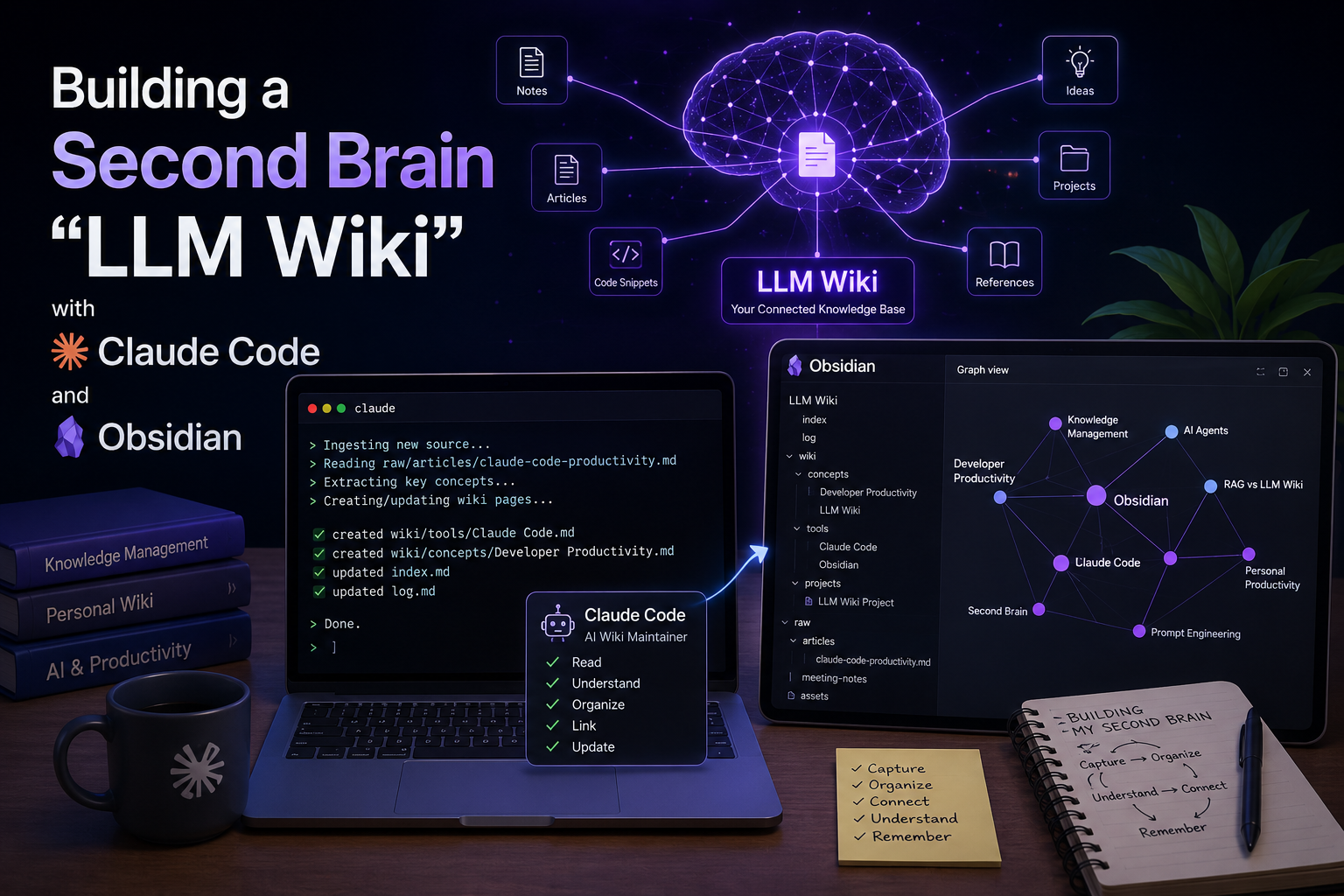

1. Introduction

Recently, I explored the idea of an “LLM Wiki”, inspired by Andrej Karpathy’s proposal for building personal knowledge bases using LLMs. The...

We make services people love by the power of Gen AI.